🤖⚠️ 4 MAJOR Risks of AI to Humanity

Hi all,

We got something wrong. After announcing our paid subscription feature on Sunday, we received a lot of feedback that our pricing of $20 a month was simply too high and created a barrier for many of you. I also realize that our paid article had not been released yet, so many of you may not know what you’re getting into.

The primary goal of this newsletter is to make financial literacy accessible to everyone, and even on a paid product, that mission should also ring true. So after careful consideration, we decided to make an adjustment. We are reducing the price of our paid tier to $7.50 a month, and if you subscribe annually, it will be the equivalent of $5 a month (the lowest that Substack will let their authors charge). The readers who already subscribed at $20 a month will receive the difference as a refund.

Our first paid article goes out tomorrow, and you’ll always have the option to subscribe or not. The two free newsletters on Wednesday and Sunday will remain unchanged. Your feedback remains valuable, and I’m grateful for any opportunity to serve you all.

Thank you for your support, and please forgive me for the snafu :)

- Humphrey

The Weekly Brief

Plunging Tax Revenue Accelerates Debt-Ceiling Deadline (WSJ)

The June 1 deadline, the date by which the U.S. government is estimated to run out of money and default on its debt obligations, may need to move earlier because of disappointing tax revenue. The amount collected fell about $250B short of predictions, meaning the U.S. may run out of cash before mid-June tax payments roll in. This year’s declining tax revenue is spurring urgent talks between the Biden administration and congressional Republicans on how to lift the debt ceiling.

Why Does It Matter?

This could be a sign that federal revenue is becoming more volatile and unpredictable. It remains to be seen whether this trend will continue or if it is a result of the pandemic. A default would be devastating, and a tighter timeline only adds pressure to push a deal through.

Consumer Debt Passes $17T for First Time in History (CNBC)

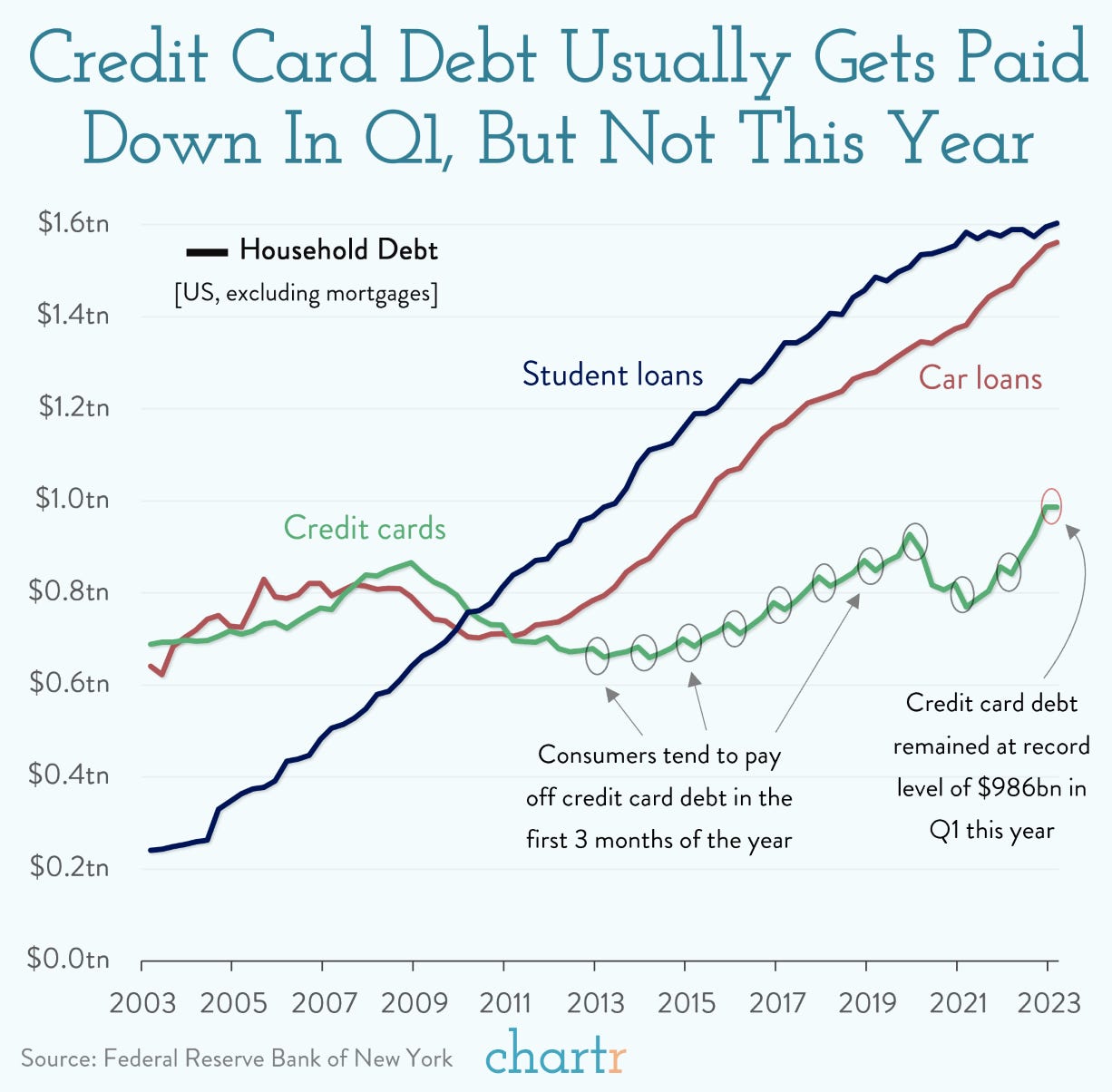

Despite the sharp pullback in mortgage demand, total consumer debt hit a new high in Q1 2023. The $17.05T amount marks an increase of $150B, or 0.9%, during the January to March period. Mortgage originations, including refinancings, were at the lowest level since Q2 2014. Student loan debt and auto loans both edged higher to $1.6T and $1.56T, respectively.

Why Does It Matter?

With rising rates, you'd expect a jump in foreclosures, but they've remained low. Delinquency rates for all debt are up slightly, though, rising 0.2 percentage points to 3%, the highest since Q3 2020.

Fed Officials Reveal Debate Over Whether to Pause Rate Hike in June (Bloomberg)

Fed officials are debating a pause on rate hikes, which have lifted interest rates by five percentage points in a little over a year. One of the Fed’s more hawkish policymakers is suggesting the need to keep raising interest rates to tame inflation, while four others have stressed watching the impact of the rate hikes made so far.

Why Does It Matter?

Fed officials have been united on the tightening done up until this point, but divisions are starting to emerge on what the next steps are.

You’ll Find This Interesting

In this interview with Elon Musk by CNBC, Musk compares OpenAI to a non-profit committed to saving the Amazon rainforest that suddenly turned into a for-profit logging company. Watch the video to learn more about his take on OpenAI!

Hump Days Scoop

Sam Altman, chief executive of OpenAI (the company behind ChatGPT), testified before Congress over artificial intelligence concerns. The Biden administration has been increasingly concerned about AI, and there are growing efforts on Capitol Hill to draft new regulations on the technology. This week we look into Altman’s vision and the big risks surrounding AI.

Why was Altman testifying before Congress?

Usually, when big tech CEOs testify before Congress, it is because Congress sees a risk to the American population and wants to gather more information to make more educated policy decisions. Sam Altman is taking a whole different approach. He’s actually used his appearance before a U.S. Senate judiciary subcommittee to urge Congress to impose new rules on big tech. Altman, who has become the global face of AI, is currently in the midst of a month-long international goodwill tour to talk to policymakers about AI and the risks associated with it.

It is quite rare for big tech CEOs to call for more regulation on their own industries, but Altman was quoted in the hearing saying, “If this technology goes wrong, it can go quite wrong.”

What’s so risky about AI?

“Artificial intelligence has the potential to improve nearly every aspect of our lives, but that also creates serious risks,” said Altman at the hearing. We outline four of the most significant risks below.

Job losses due to AI automation. There is a high likelihood that as AI models become smarter, they will begin replacing many of the jobs that humans currently work in. An estimated 85M jobs are expected to be lost between 2020 and 2025. The flip side is that AI is estimated to create 97M jobs by 2025, but much of the population won’t have the skills needed for these technical roles.

Social manipulation through AI algorithms. Deepfakes have infiltrated political and social spheres, allowing bad actors to share misinformation that is difficult to debunk right away. The nightmare scenario is that deepfakes become nearly impossible to distinguish from authentic content.

Widening socioeconomic inequality as a result of AI. AI models have inherent biases baked into the algorithms, which may compromise DEI initiatives through AI-powered recruiting.

Financial crises brought about by AI algorithms. Algorithmic trading could be responsible for the next financial crisis, given how quickly the financial sector adopted the technology. For example, these algorithms make thousands of trades at a blistering pace, which could lead to sudden crashes and extreme market volatility.

For more risks of AI systems, read this.

What is Altman’s vision for AI regulation?

Altman laid out a three-point plan on how the U.S. government should regulate companies like his.

Form a new government agency charged with licensing large AI models and create a set of government standards for licensees, with the power to revoke licenses from companies whose models don’t comply.

Create a set of safety standards for AI models, including evaluations of their dangerous capabilities. An example would be that models have to pass certain tests for safety, such as whether they could self-replicate, go rogue and start acting independently.

Require audits by independent experts of the models’ performance on a set of defined metrics.

Chart of the Week

In Case You Missed It

Partnerships

If you are a brand looking to reach thousands of business leaders and investors through our two weekly newsletters, we would love to hear from you!